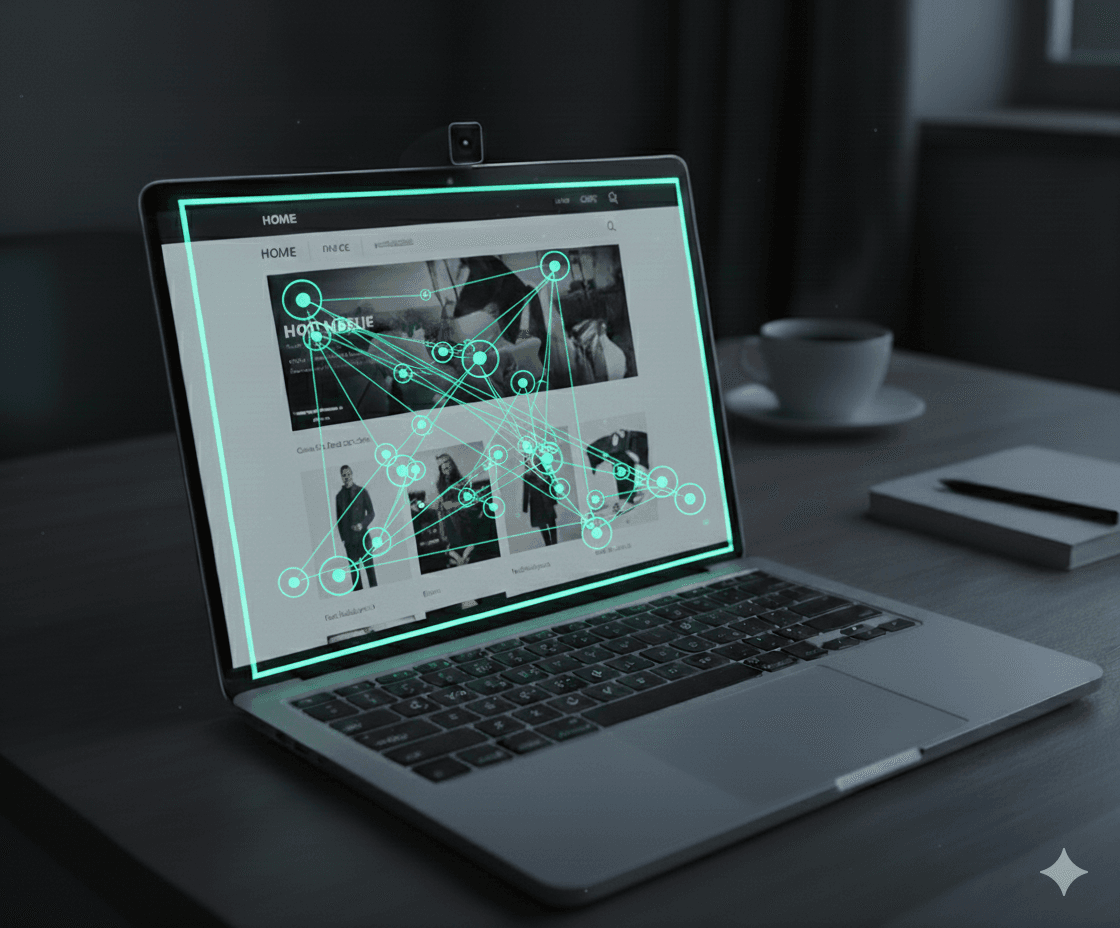

Precision eye tracking from any webcam

Capture high-fidelity gaze signals without hardware, plugins, or installs.

For teams who measure human attention at scale

Understand human behaviour by measuring where people look during online tasks.

Academic research

Measure attention, memory, and decision-making in scalable online studies.

Marketing & advertising

Test landing pages and creative. Quantify what gets noticed to improve messaging and conversion.

Product & UX

Validate layouts and information hierarchy. See which elements users miss, ignore, or fixate on.

How it works

Launch a study in minutes

Chiasm stays out of the way—integrate once, run anywhere.

Design your study

Build your experiment in jsPsych, Qualtrics, or any browser platform.

Integrate Chiasm

Add a lightweight script to your workflow to start capturing gaze data.

Analyze results

Export or stream clean, timestamped data for analysis or reporting.

Why Chiasm

Eye tracking that fits your workflow

Built for speed, scale, and seamless integrations.

Easy setup

Large-scale, diverse data

Seamless integration

FAQ

Frequently asked questions

A few quick answers to set expectations up front.

How accurate is Chiasm’s webcam eye tracking?

Accuracy depends on webcam quality, lighting, head movement, and screen setup. Under favorable conditions, Chiasm is designed to approach the performance of hardware-based eye trackers. In preliminary internal evaluations, we observed a mean gaze offset (accuracy) of ~1.5° of visual angle and a mean spatial precision of ~1.0° of visual angle.
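To put these angular figures in screen terms: an on-screen extent s that subtends a visual angle θ at viewing distance d satisfies s = 2d·tan(θ/2). The sketch below converts degrees to pixels. The 60 cm viewing distance and the 34.5 cm wide, 1920 px display are illustrative assumptions, not the setup used in Chiasm's evaluations, and the helper name is hypothetical.

```python
import math


def visual_angle_to_pixels(angle_deg: float, distance_cm: float,
                           screen_width_cm: float, screen_width_px: int) -> float:
    """Convert a visual angle in degrees to an on-screen extent in pixels.

    Uses extent = 2 * distance * tan(angle / 2), which assumes the region of
    interest lies near the point where the line of sight meets the screen
    at a right angle.
    """
    extent_cm = 2 * distance_cm * math.tan(math.radians(angle_deg) / 2)
    pixels_per_cm = screen_width_px / screen_width_cm
    return extent_cm * pixels_per_cm


# Hypothetical setup: 60 cm viewing distance, 34.5 cm wide display at 1920 px.
for angle in (1.0, 1.5):
    px = visual_angle_to_pixels(angle, 60.0, 34.5, 1920)
    print(f"{angle:.1f} deg is about {px:.0f} px")
```

With these assumed numbers, 1.5° works out to roughly 87 px and 1.0° to roughly 58 px. Sitting farther from the screen or using a higher pixel density increases the pixel equivalent of the same angle.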

Which browsers and devices are supported?

Currently, Chiasm works best in Google Chrome. We plan to support all modern browsers over time. Chiasm runs on laptops and desktops equipped with a webcam.

How does integration work?

After you sign up, you receive an API token (a secret key that connects your study to Chiasm and tracks usage). You then add a lightweight JavaScript snippet to your study flow (jsPsych, Qualtrics, or a custom setup). Participants complete a calibration, and your study then runs as usual while gaze data is captured.

What kind of data do I get?

You get timestamped gaze data (x, y coordinates) linked to your custom study events/segments, plus session metadata such as screen resolution, screen size, and calibration data.
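As an illustration of working with gaze samples linked to study segments, here is a minimal sketch that groups exported samples by segment. The record layout (t_ms, x, y), the Segment type, and the segment names are hypothetical assumptions for this example, not Chiasm's actual export format.

```python
from dataclasses import dataclass


@dataclass
class GazeSample:
    t_ms: float  # timestamp in milliseconds (hypothetical field name)
    x: float     # horizontal gaze position in pixels
    y: float     # vertical gaze position in pixels


@dataclass
class Segment:
    name: str        # hypothetical study segment label
    start_ms: float  # inclusive start time
    end_ms: float    # exclusive end time


def group_by_segment(samples: list[GazeSample],
                     segments: list[Segment]) -> dict[str, list[GazeSample]]:
    """Map each segment name to the samples whose timestamp falls inside it."""
    grouped: dict[str, list[GazeSample]] = {seg.name: [] for seg in segments}
    for sample in samples:
        for seg in segments:
            if seg.start_ms <= sample.t_ms < seg.end_ms:
                grouped[seg.name].append(sample)
                break  # assumes segments do not overlap
    return grouped


# Usage with made-up data: two one-second segments, one sample every 250 ms.
segments = [Segment("stimulus_A", 0, 1000), Segment("stimulus_B", 1000, 2000)]
samples = [GazeSample(t, 960 + t / 100, 540) for t in range(0, 2000, 250)]

for name, group in group_by_segment(samples, segments).items():
    mean_x = sum(s.x for s in group) / len(group)
    print(name, len(group), "samples, mean x:", mean_x)
```

Samples that fall outside every segment (for example, during instructions) are simply left out of the result.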

Can I stream data to my own server?

Yes—if your study runs on your own setup, Chiasm can send gaze data directly to your server (including a local computer or your own cloud server). If you’re using a platform like Qualtrics, the data is stored on Chiasm’s servers and you export it afterward.

How does pricing work?

Pricing is usage-based: you only pay for the eye-tracking data you choose to process. You can enable eye tracking only for specific parts of a study (events/segments), and you won’t be charged for periods where gaze data isn’t needed—such as instructions, breaks, or questionnaires.

How is usage tracked and monitored?

Usage is tracked per API token (a secret key that identifies which study/app is sending data). In the dashboard, you can assign time credits to tokens for each study and monitor usage as your study runs.

Do you store video?

By default, Chiasm does not store webcam video. We process frames in real time to produce derived gaze signals and session metadata. If you explicitly opt in, we may store limited calibration/validation recordings for internal quality improvement of the eye-tracking system.

Is processing done on-device (edge) or in the cloud?

Chiasm uses cloud-based processing: webcam frames are streamed to the cloud and processed in real time, without being stored. This enables consistent processing and quality control across devices.

Is Chiasm GDPR compliant?

Yes. We carefully follow GDPR requirements in our processes and data-handling protocols. For details, see our privacy policy.

Request demo

Let's talk

Tell us about your study and we’ll help you launch quickly. Typical response time: 1 business day.